
Cite actual academic articles in your response, not blog posts like the ones I have linked.

Instead, you should report and interpret the 95% confidence intervals (see section 3.7 of the book I have referenced for tips on reporting non-significant results).
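Here is a minimal sketch of what reporting a 95% confidence interval could look like for one dummy coefficient in a logistic regression. It converts the coefficient into an odds ratio with a Wald interval, exp(β ± z·SE). The coefficient and standard error are hypothetical numbers, not values from the question, and `odds_ratio_ci` is an illustrative helper, not part of any library. In practice you would read β and SE off your fitted model's output.

```python
import math
from statistics import NormalDist


def odds_ratio_ci(beta: float, se: float, level: float = 0.95) -> tuple[float, float, float]:
    """Return (odds ratio, lower, upper) for a Wald interval on a logit coefficient."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    return math.exp(beta), math.exp(beta - z * se), math.exp(beta + z * se)


# Hypothetical estimate for the "group A vs. control" dummy; not taken from the question.
or_a, lower, upper = odds_ratio_ci(beta=0.20, se=0.15)
print(f"OR = {or_a:.2f}, 95% CI [{lower:.2f}, {upper:.2f}]")  # OR = 1.22, 95% CI [0.91, 1.64]
```

In this made-up example the interval includes 1, so the difference is not significant at the 5% level. The data are still compatible with odds up to about 64% higher in group A, though. That range, not a power figure, tells the reviewer how strongly the result supports "no difference".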

My recommendation is that you respond to the reviewer respectfully, explaining that the statistical literature has established that a post hoc power calculation is not appropriate. You should be able to find a few other papers to support your claim that a post hoc power calculation doesn't tell you anything the p-value doesn't. "Observed" power is computed from the same estimate and standard error as the p-value, so for a given test it is a one-to-one function of the p-value, and a nonsignificant result always comes with low observed power.

It is well known that statistical power calculations can be valuable in planning an experiment. There is also a large literature advocating that power calculations be made whenever one performs a statistical test of a hypothesis and one obtains a statistically nonsignificant result. Advocates of such post-experiment power calculations claim the calculations should be used to aid in the interpretation of the experimental results. This approach, which appears in various forms, is fundamentally flawed. We document that the problem is extensive and present arguments to demonstrate the flaw in the logic.

The Shapiro-Wilk test was applied to verify the normality of the data. Logistic regression analysis was performed to predict the probability of occurrence of BP.
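The claim that post hoc power adds nothing beyond the p-value can be checked directly. The sketch below assumes a two-sided z-test, which is roughly what the Wald test on a logistic-regression coefficient is. It plugs the observed effect back in as if it were the true effect and computes the resulting "observed power". The function name is illustrative.

```python
from statistics import NormalDist


def observed_power(p_value: float, alpha: float = 0.05) -> float:
    """'Observed' (post hoc) power of a two-sided z-test, treating the observed
    effect as if it were the true effect."""
    nd = NormalDist()
    z_obs = nd.inv_cdf(1 - p_value / 2)  # |z| implied by the two-sided p-value
    z_crit = nd.inv_cdf(1 - alpha / 2)   # rejection threshold
    # Probability that |Z| > z_crit when Z ~ N(z_obs, 1)
    return nd.cdf(z_obs - z_crit) + nd.cdf(-z_obs - z_crit)


for p in (0.01, 0.05, 0.20, 0.50):
    print(f"p = {p:.2f} -> observed power = {observed_power(p):.2f}")
```

The only input is the p-value, so observed power carries no new information. At p = 0.05 it is about 0.5, and it decreases as p grows. A nonsignificant result therefore always comes with observed power of at most about 0.5, and "the study was underpowered" follows automatically.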

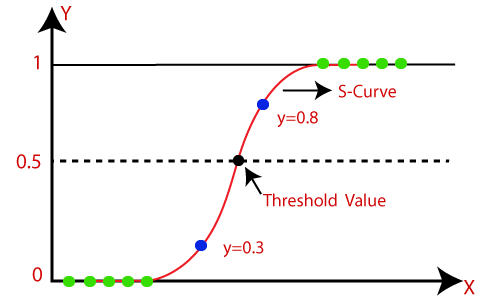

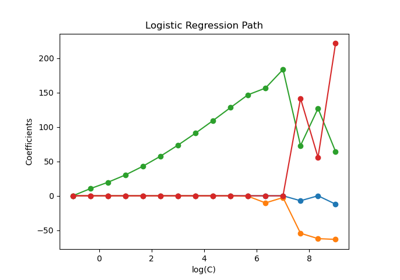

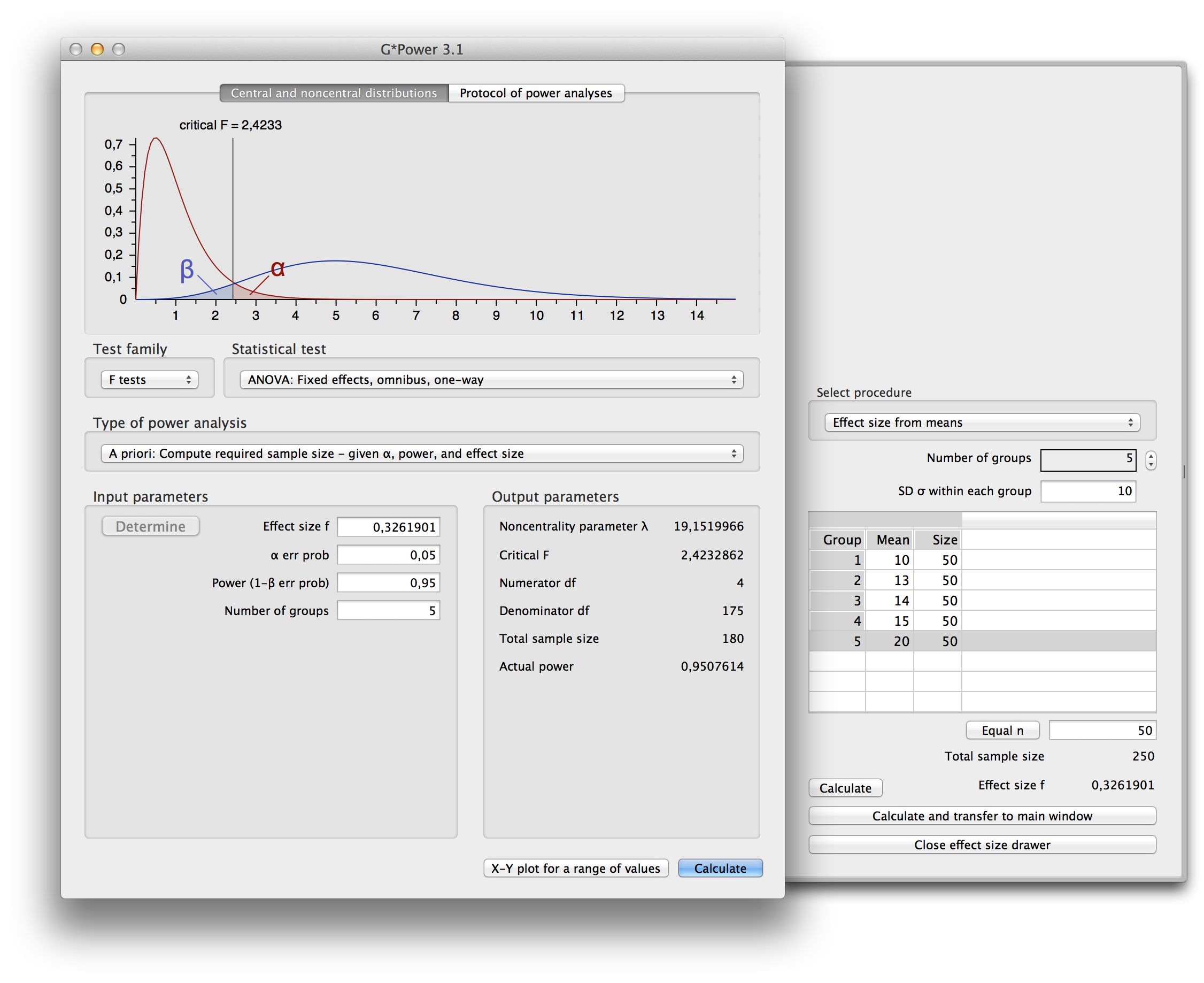

I don't mean to sound flippant, but a post hoc power analysis is really useless at this point (see here or here, or I think chapter 3 or 4 in this book).

EDIT: That book I linked to references this paper.

Per reviewer request, I need to do a power analysis for a logistic regression model with multiple dummy variables. I have four groups: Control, (Treatment) A, B, and C. The hypothesis is that groups A and B do NOT differ from the control group, but group C does. I tested this hypothesis by running a logistic regression model with 3 dummy variables, with the control group as the baseline, along with 3 control variables. The results supported the hypothesis, but one of the reviewers recommended conducting a power analysis, saying that the non-significant differences between control and group A, and between control and group B, might be a result of lack of power.

I tried to conduct the power analysis using G*Power, but because there are no true values of Pr(Y=1|X=1) under H0 and Pr(Y=1|X=1) under H1, as I'm dealing with political perception as the dependent variable, I couldn't do the analysis. I found that power analysis simulation using R could be a solution, but unfortunately I don't have enough knowledge of R to adapt the code provided here (Simulation of logistic regression power analysis - designed experiments) to my study design. Any help or suggestions would be greatly appreciated.

P.S. I am just wondering whether the following is a valid alternative method. A meta-analysis study suggested that the mean correlation of the effect in question is about .5. I would use that value to calculate R2, use the R2 value to calculate Cohen's f2, and estimate the effect size (i.e., small, medium, large) based on the f2 value. If, for example, the effect size is large, I would plug .40 into the "Effect size f" box in G*Power and select ANCOVA to calculate power.

Cardiometry, Issue 25, December 2022, pp. 1731-1737. DOI: 10.18137/cardiometry.
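The conversion steps in the P.S. can be sketched as follows. The chain is R² = r², then f² = R² / (1 - R²), then Cohen's f = √f², and f² is labeled with Cohen's benchmarks of 0.02 (small), 0.15 (medium), and 0.35 (large). The correlation of .5 is an assumed reading of the meta-analytic value, and the helper names are hypothetical. This only implements the proposed procedure and does not endorse it. G*Power's ANCOVA module assumes a continuous, normally distributed outcome, so for a binary dependent variable it is at best a rough approximation.

```python
import math


def f2_from_r(r: float) -> float:
    """Cohen's f^2 from a correlation r, via R^2 = r^2 and f^2 = R^2 / (1 - R^2)."""
    r2 = r ** 2
    return r2 / (1 - r2)


def cohen_label(f2: float) -> str:
    """Cohen's benchmarks for f^2: 0.02 small, 0.15 medium, 0.35 large."""
    if f2 >= 0.35:
        return "large"
    if f2 >= 0.15:
        return "medium"
    if f2 >= 0.02:
        return "small"
    return "negligible"


r = 0.5                # assumed meta-analytic correlation
f2 = f2_from_r(r)      # 0.25 / 0.75 = 0.333...
f = math.sqrt(f2)      # Cohen's f, the scale of G*Power's "Effect size f" box
print(f"f^2 = {f2:.3f} ({cohen_label(f2)}), f = {f:.3f}")
```

Under this assumption, f² ≈ 0.333 falls just under the "large" cutoff, and f ≈ 0.577 could be entered directly rather than rounded to a benchmark such as .40.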
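For the simulation route the question mentions, here is a minimal Monte Carlo sketch, written in Python rather than R. It estimates power for the Wald test of one treatment dummy against control, under several simplifying assumptions: it ignores the three control variables, it assumes the model's only predictors are the group dummies, and the group proportions and sample size are hypothetical planning values. With group-only dummies, the maximum-likelihood estimate of a dummy coefficient is the sample log odds ratio, with standard error √(1/a + 1/b + 1/c + 1/d), so no model fitting is needed. Such a simulation is meaningful for planning with externally justified effects. Plugging in the observed estimates reproduces the post hoc problem.

```python
import math
import random
from statistics import NormalDist


def simulate_power(p_control: float, p_treat: float, n_per_group: int,
                   alpha: float = 0.05, n_sims: int = 2000, seed: int = 1) -> float:
    """Monte Carlo power of the Wald test for one treatment dummy (vs. control)
    in a logistic regression whose only predictors are group dummies."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(n_sims):
        # Number of Y = 1 outcomes in each simulated group
        y_c = sum(rng.random() < p_control for _ in range(n_per_group))
        y_t = sum(rng.random() < p_treat for _ in range(n_per_group))
        cells = (y_c, n_per_group - y_c, y_t, n_per_group - y_t)
        if 0 in cells:
            continue  # log odds ratio undefined (separation): count as non-rejection
        log_or = math.log(y_t / (n_per_group - y_t)) - math.log(y_c / (n_per_group - y_c))
        se = math.sqrt(sum(1 / k for k in cells))
        if abs(log_or / se) > z_crit:
            rejections += 1
    return rejections / n_sims


# Hypothetical planning values (not from the question): 30% vs. 40% with Y = 1, 150 per group.
print(simulate_power(0.30, 0.40, n_per_group=150))
```

Adding the control variables would require fitting a full logistic model in each replication, for example with a statistics package, instead of using the closed-form log odds ratio.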
Heart Disease Prediction Based on Age Detection Using Logistic Regression over Random Forest.

Aim: To improve the accuracy of Heart Disease Prediction using Logistic Regression and Random Forest. Materials and Methods: This study compares two groups, i.e., Logistic Regression and Random Forest. Each group has a sample size of 10, and the study parameters are an alpha of 0.01, a beta of 0.2, and a power (G*Power) value of 0.8. Results: Logistic Regression achieved a higher accuracy (91.60%) than Random Forest in Heart Disease Prediction. The difference is statistically significant (significance value 0.01, p < 0.05). Conclusion: The Logistic Regression model is significantly better than the Random Forest in Heart Disease Prediction. It can also be considered a better option for Heart Disease Prediction.
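The abstract's parameters are linked by power = 1 - β, so 0.8 = 1 - 0.2. The sketch below shows how α, power, and the per-group sample size together constrain the detectable effect. It assumes a two-sided, two-sample comparison of means with standardized effect d, which the abstract does not state, and uses the normal approximation n = 2((z₁₋α/₂ + z_power) / d)². Both function names are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group(d: float, alpha: float = 0.01, power: float = 0.8) -> float:
    """Normal-approximation sample size per group for a two-sided, two-sample test."""
    nd = NormalDist()
    z_sum = nd.inv_cdf(1 - alpha / 2) + nd.inv_cdf(power)
    return 2 * (z_sum / d) ** 2


def detectable_d(n: int, alpha: float = 0.01, power: float = 0.8) -> float:
    """Smallest standardized effect detectable with n per group (same approximation)."""
    nd = NormalDist()
    z_sum = nd.inv_cdf(1 - alpha / 2) + nd.inv_cdf(power)
    return z_sum * math.sqrt(2 / n)


beta = 0.2
print("power =", 1 - beta)
print("n per group needed for d = 1.0:", math.ceil(n_per_group(1.0)))
print("detectable d with n = 10 per group:", round(detectable_d(10), 2))
```

Under these assumptions, 10 per group at α = 0.01 and power 0.8 can only detect an effect of roughly d ≈ 1.5 or larger. A t-based calculation, such as G*Power's, would require slightly larger samples than this normal approximation.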
